AI Governance Isn't a Deployment Problem. It's a Discovery Problem.

Most enterprises think AI governance is about controlling what they adopt. The real problem is auditing what already arrived — inside tools they trust, tools they pay for, tools they approved years ago. The frameworks exist. The inventory does not.

Not long ago, the SVP of Product & Technology at a Fortune 500 company asked their procurement team to do something that would have seemed unusual two years ago: flag every tool in their software catalog that has AI or LLM capabilities. Not to block them. Not to start a review cycle. Just to know what they have.

That request is becoming more common across enterprise procurement and IT functions. The fact that it needs to happen at all points to a structural gap in how most organizations are thinking about AI risk.

Two realities, one organization

Most large enterprises are actively building toward using AI to manage risk: fraud detection, compliance monitoring, and anomaly detection in financial workflows. The Workday and FT Longitude C-Suite AI Indicator Report, which surveyed 2,355 senior executives, found that financial services leaders ranked AI for fraud detection and compliance as their top organizational priorities, and 98% of CEOs said there would be some immediate business benefit from implementing AI.

At the same time, AI has already arrived inside tools that those same enterprises approved, contracted, and trusted, often before anyone thought to ask whether it was there. This is not about employees going rogue or chatbots being misused. It is quieter than that. According to the 2025 SaaS Benchmarks Report by High Alpha, 92% of SaaS companies have launched or plan to launch AI features. Notion, Grammarly, Zoom, Salesforce. None of them were bought as AI tools. The AI came later, as a feature update, often enabled by default. A 2025 McKinsey report found that the percentage of organizations using generative AI in at least one business function jumped from 71% in 2024 to 88% in 2025, and that velocity is forcing IT to govern tools that are evolving rapidly under existing contracts.

Most enterprises are not deliberately ignoring AI risk. They just never built the tools to see where it already lives in their stack.

What the risk actually looks like

When a SaaS vendor ships an AI feature, they add a processing layer between the user and their data. That layer has its own external dependencies. The tool your IT team approved may now be sending data to an LLM provider your procurement team never evaluated. Your contract is with the vendor. What happens downstream depends on subprocessor agreements that most organizations have not revisited, agreements often written before large language models existed as a commercial reality.
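One way to make that downstream exposure concrete is to compare the subprocessor list reviewed at approval time with the list the vendor publishes today. The sketch below is illustrative only: the function name and the vendor names are hypothetical placeholders, and in practice the two lists would come from the signed data processing agreement and the vendor's current subprocessor page.

```python
def new_subprocessors(approved: set[str], current: set[str]) -> set[str]:
    """Return subprocessors listed now that were absent from the reviewed list."""
    def normalize(names: set[str]) -> set[str]:
        # Compare case-insensitively and ignore stray whitespace.
        return {name.strip().lower() for name in names}

    return normalize(current) - normalize(approved)


# Hypothetical example data: these are placeholder names, not real vendors.
approved_at_review = {"ExampleCloud Hosting", "ExampleMail"}
listed_today = {"ExampleCloud Hosting", "ExampleMail", "Example LLM Provider"}

unreviewed = new_subprocessors(approved_at_review, listed_today)
print(sorted(unreviewed))
```

Anything the function returns is a dependency that entered the data path after the original review, which is a reasonable trigger for revisiting the agreement.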

There is also the problem of model behavior. Software ships with version numbers and patch notes. AI models get updated quietly. The tool approved in January may behave differently in September. Same contract, different model, different risk surface, no notification. Unlike a software update, a model update comes with no changelog.

Here is something that makes AI risk structurally different from traditional software risk: AI and humans do not process data the same way. A human reading a document reads what is there. An LLM processes the entire context window; it infers, it pattern-matches, it draws on statistical relationships from training data. It can surface information that was technically present but never intended to be surfaced. It can generate outputs that look authoritative but are wrong. And it does this at a speed and scale that makes human verification practically impossible at the enterprise level. Workday's own UX research team has noted that the probabilistic nature of AI systems may be a particular mismatch for financial users who rely on software to make critical decisions at speed, because the same input does not guarantee the same output. That is a fundamentally different risk profile from traditional software, and most governance frameworks were not designed for it.

The Workday and FT Longitude research puts the awareness gap into numbers: 62% of business leaders rank security and privacy as their top concern when it comes to AI adoption, yet only 4% say their data is fully accessible across their organization. IBM's own research found that only 24% of generative AI initiatives are currently secured. You cannot govern what you cannot see. And right now, most enterprises cannot fully see their AI exposure.

IT had a process for this. AI broke it.

Before a new software tool enters an enterprise environment, IT typically runs it through a procurement security review. Penetration testing. SOC 2 compliance checks. Data residency verification. Vendor risk assessments. Legal review of data processing agreements. These are established controls, built up over decades of managing software risk.

The problem is that those controls were designed for deterministic software, software that does what it is coded to do consistently, with traceable behavior. AI does not work that way. It is probabilistic. Its outputs are not guaranteed. Its behavior changes as underlying models are updated. And critically, it was not evaluated during the security review process. It arrived after the tool had already been approved. As Drata's analysis of AI risk management puts it, traditional risk management often focuses on static systems and known threats, while AI risk management must account for dynamic, evolving models that can behave unpredictably and interact with vast amounts of sensitive data. That is not a marginal difference. It is a category difference.

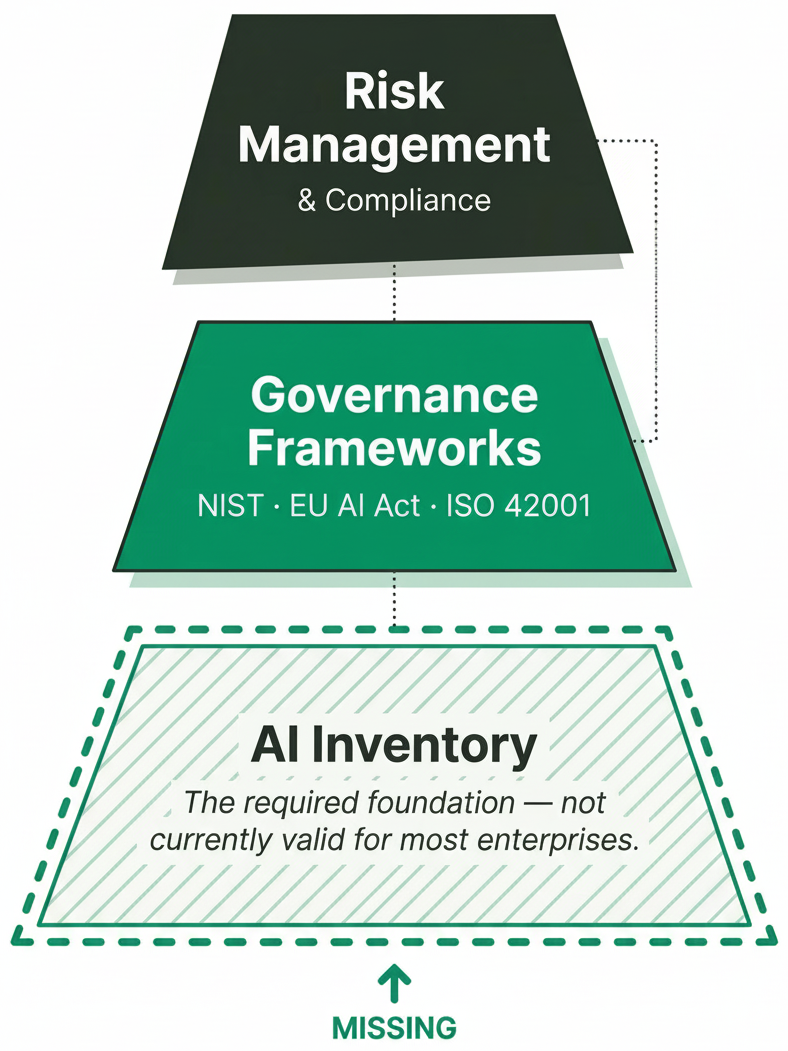

Frameworks are beginning to catch up. The NIST AI Risk Management Framework provides a structure for mapping, measuring, managing, and governing AI risk. The EU AI Act, which came into force in 2024, imposes explicit obligations on organizations that use high-risk AI systems, with Commission enforcement beginning in August 2026. ISO 42001 gives enterprises a certification path for responsible AI governance.

But here is the gap: every one of these frameworks lists mapping your AI use cases as step one. ISO 42001 Section 8.4 makes this a formal control requirement: organizations must maintain an inventory of AI systems and their purposes before any other governance standard can be applied. The NIST AI RMF structures the same requirement through its Map function, and the EU AI Act requires registration of high-risk systems before market placement. In each case, the starting assumption is that you already know what AI you have. Before you can tier your risk, apply a governance standard, or meet a compliance obligation, you need to know which tools in your stack contain AI. That assumption is not currently valid for most enterprises. The frameworks are ahead of the inventory.

The three vectors, and why all three matter

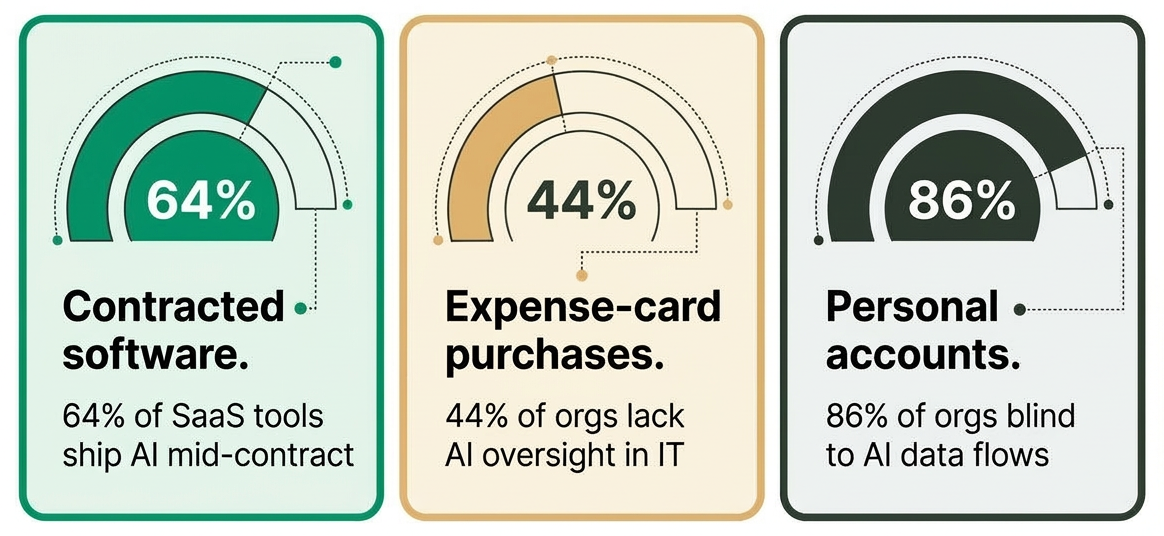

The Fortune 500 request cited earlier framed the problem across three distinct entry points. Each carries a different risk profile. Most governance frameworks address only the first one seriously.

Software under contract. Tools that IT approved, procurement signed off on, and finance pays for every month. These tools may have shipped with AI capabilities after the original security evaluation. Nobody flagged it because nobody was watching for it. According to the 2025 SaaS Benchmarks Report, 64% of SaaS companies now embed AI as a supporting feature, meaning AI capabilities are being added to tools mid-contract, without triggering a new procurement review.

Expense-card purchases. Tools purchased by individual employees or departments are typically outside formal procurement channels. These do not appear in the software catalog. The data flowing through them is invisible to IT and security teams. Delinea's 2025 AI in Identity Security report found that 44% of organizations struggle with business units deploying AI solutions without involving IT or security teams.

Personal accounts. Completely off the books. No contract, no data processing agreement, no visibility. According to the 2025 State of Shadow AI Report, nearly half of people using generative AI platforms do so through personal accounts that their organizations are not overseeing. The same report found that the average enterprise hosts 1,200 unauthorized applications, and that 86% of organizations are entirely blind to AI data flows.

Taken together, these three vectors paint a consistent picture. A WalkMe survey of 1,000 working adults in 2025 found that 78% reported using unapproved AI tools at work. UpGuard's November 2025 report put that figure higher: more than 80% of workers use unapproved AI tools, including nearly 90% of security professionals themselves. Fewer than 20% report using only company-approved AI.

78% of employees using unapproved tools is no longer a shadow IT problem. It is a parallel system running alongside the official stack.

AI is for everyone. So is the risk.

One reason shadow AI spreads faster than shadow IT ever did is how AI tools are marketed. Unlike enterprise software, which requires IT setup, configuration, and training, AI tools are explicitly designed to be accessible. They are marketed to both technical and non-technical users. No coding required. Works in plain language. Just describe what you want.

That accessibility is useful. It is also what makes governance difficult.

According to Workday's global study on AI and human potential, 83% of professionals familiar with AI believe it will augment human capabilities, leading to increased productivity and new forms of innovation. That optimism is widespread. But it coexists with a governance gap that almost nobody is talking about. The same Workday Responsible AI research found that only 22% of employees say their company has shared guidelines on the responsible use of AI, which is likely why only 52% of employees actually welcome AI in the workplace. Workday calls this the AI trust gap. The tools are arriving. The guidelines are not.

When an HR manager uses an AI tool to shortlist candidates, a finance analyst uses it to summarize contract terms, or a procurement lead uses it to benchmark vendor pricing, none of those actions requires technical knowledge. But all of them carry organizational risk if the AI output is wrong, biased, or based on data it should not have accessed.

Every AI vendor includes a disclaimer. AI can make mistakes. Please verify outputs. That is legally responsible language. It is also largely disconnected from how AI tools are actually used in practice. Nobody double-checks AI-summarized meeting notes. Nobody re-reads the contract the AI analyzed. The disclaimer exists. The verification behavior does not.

Workday's Beyond Productivity research, which surveyed 3,200 leaders and employees in November 2025, found that roughly 37% of the time saved by AI is offset by rework: employees correcting, clarifying, or rewriting low-quality AI-generated content. For every 10 hours of efficiency gained, nearly 4 hours are lost fixing output. Only 14% of employees consistently achieve net-positive outcomes from AI use. Dr. Kate Niederhoffer, chief scientist at BetterUp Labs, has a name for this: workslop. It is not a user failure. It is a structural one. AI has been layered onto roles that were never updated to accommodate it, with no clear guidance on where human judgment must apply.
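The rework arithmetic above is easy to reproduce. This is a minimal sketch that assumes the roughly 37% offset applies uniformly to gross hours saved; the function name is hypothetical and the survey itself may define the offset differently.

```python
def net_hours_saved(gross_hours_saved: float, rework_fraction: float) -> float:
    """Hours saved after subtracting the share lost to rework."""
    if not 0.0 <= rework_fraction <= 1.0:
        raise ValueError("rework_fraction must be between 0 and 1")
    return gross_hours_saved * (1.0 - rework_fraction)


gross = 10.0   # hours of efficiency gained
rework = 0.37  # the roughly 37% rework offset cited above (assumed uniform)

lost = gross * rework
net = net_hours_saved(gross, rework)
print(f"lost to rework: {lost:.1f} h, net gain: {net:.1f} h")
```

Under that assumption, 10 gross hours leave about 6.3 net hours, which is where the "nearly 4 hours lost" framing comes from.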

Despite this, 77% of the most enthusiastic AI users in the same study reported checking AI output with the same or more rigor than human work, absorbing a massive hidden verification burden that no organization planned for.

At the individual level, skipping that verification is an understandable shortcut. At the enterprise level, it is a transparency and accountability problem. When an AI tool produces a flawed analysis that drives a business decision (a wrong financial projection, a discriminatory hiring shortlist, a procurement recommendation based on hallucinated vendor data), the accountability chain is not clear. IBM identifies accountability as an unresolved issue for enterprise AI, pointing to wrongful arrests from facial recognition and fatal crashes involving autonomous systems as cases where policymakers and regulatory agencies are still working out liability. The vendor disclaims responsibility. The employee did not know how to verify. The organization bears the consequence. And an IBM researcher notes directly: "if we don't have that trust in those models, we can't really get the benefit of that AI in enterprises."

A Komprise 2025 IT survey of enterprise IT leaders found that 90% are concerned about shadow AI from a privacy and security standpoint, and nearly 80% have already experienced a negative AI-related data incident. Concern is not the problem. Structural accountability is.

Why the current approach is not closing the gap

The financial consequences of ungoverned AI are now documented. According to IBM's 2025 Cost of Data Breach Report, shadow AI incidents now account for 20% of all breaches and carry a meaningful cost premium: $4.63 million per incident versus $3.96 million for standard breaches. Cisco's 2025 research found that 46% of organizations reported internal data leaks through generative AI, not through traditional exfiltration but through employees prompting external models with sensitive context.

Despite all of this, only 22% of enterprises had a visible, defined AI governance strategy in 2025, according to BetterCloud's industry analysis. AI investment was accelerating. Governance was not keeping pace.

The Deloitte State of AI 2026 report, which surveyed 3,235 senior leaders across 24 countries, found that enterprises where senior leadership actively shapes AI governance achieve significantly greater business value than those that delegate AI governance solely to technical teams. True governance, Deloitte found, makes oversight everyone's role, not a parallel function sitting beside the business. The same report documented cases in which AI leaders discovered that models had been deployed to production with no formal oversight. In at least one instance, no inventory of active AI tools existed within the organization because development had proceeded without centralized tracking of what was running.

Anthropic's Project Glasswing, announced in April 2026, found thousands of previously unknown vulnerabilities across every major operating system and web browser, including flaws that survived decades of human review. The software stack enterprises are trying to govern is already more fragile than traditional security practices assumed it would be. Adding untracked AI features to that picture does not create a new category of risk. It makes an existing one harder to contain.

What closing the gap actually requires

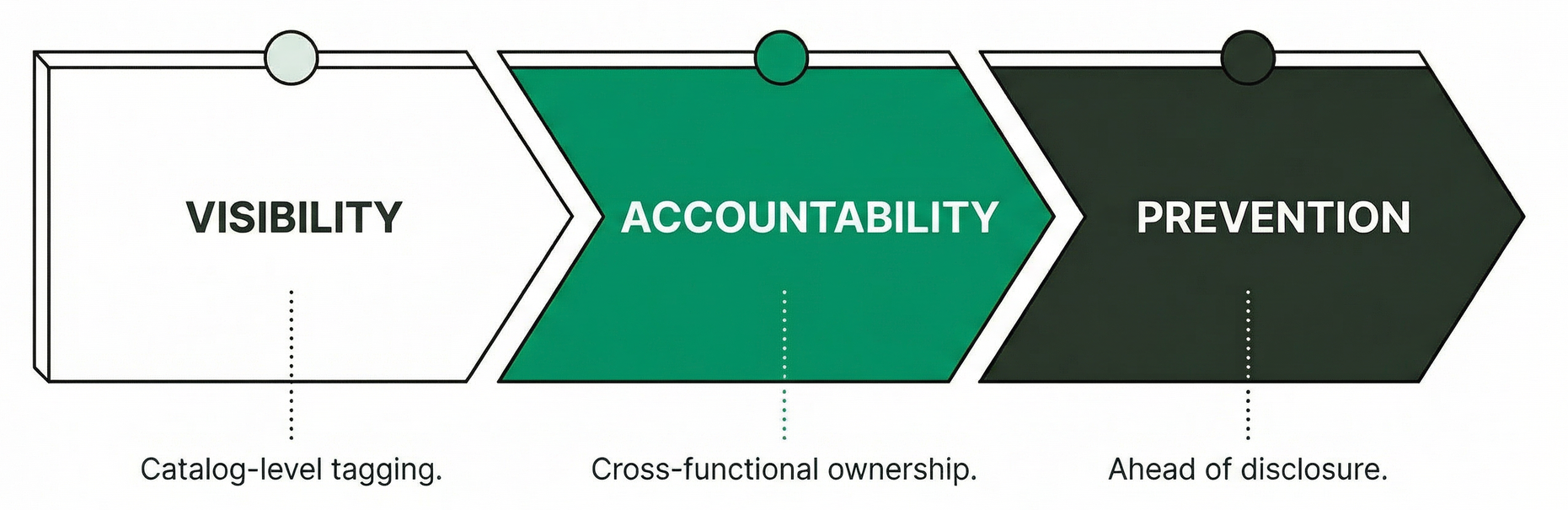

An AI governance strategy is not a policy document. It is a living inventory, actively maintained, with a clear answer to a simple question: which tools in our stack have AI capabilities, what data do those capabilities touch, and who approved them?

That means tagging AI capabilities at the catalog level, verified rather than inferred from vendor marketing. It means distinguishing between approved tools and tools that merely use AI, and building a review process that triggers when vendors ship new AI features, not just when a new tool enters the stack. It also means continuous monitoring for model drift, because the same tool, on the same contract, can behave differently in September than it did in January, with no notification and no audit trail unless one is deliberately built.
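The catalog tagging and review trigger described above could be modeled along these lines. This is a sketch under stated assumptions, not a description of any particular product: `CatalogEntry`, `record_vendor_release`, and every field name are hypothetical, and the example tool is a placeholder.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class CatalogEntry:
    tool: str
    ai_features: set[str] = field(default_factory=set)        # verified, not taken from marketing
    approved_features: set[str] = field(default_factory=set)  # AI features that passed review
    data_touched: set[str] = field(default_factory=set)       # data categories the features access
    approved_by: Optional[str] = None
    last_reviewed: Optional[date] = None

    def needs_review(self) -> bool:
        """True when the tool has AI features that nobody has approved."""
        return bool(self.ai_features - self.approved_features)


def record_vendor_release(entry: CatalogEntry, new_features: set[str]) -> bool:
    """Add newly shipped AI features to the entry and report whether a review is due."""
    entry.ai_features |= new_features
    return entry.needs_review()


# Hypothetical tool approved before it had any AI features.
entry = CatalogEntry(
    tool="Example Notes App",
    approved_by="IT security",
    last_reviewed=date(2024, 1, 15),
)
before = entry.needs_review()
after = record_vendor_release(entry, {"meeting summarization"})
print(before, after)
```

The point of the design is that the review is keyed to features rather than to the tool, so a mid-contract AI release flips the entry back into review even though nothing new was purchased.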

It also means treating all three vectors as governance problems, not just the first one.

For expense-card purchases, the starting point is spend visibility. Finance and IT teams reviewing SaaS charges through corporate cards can flag AI-native tools and route them through a lightweight approval process before renewal. Usage-based billing anomalies, sudden spikes in API consumption, or per-seat AI add-ons are often the first signal that a team has quietly adopted something outside the catalog.
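A first pass at that spend review can be quite simple. The sketch below assumes card charges are available as (merchant, month, amount) tuples; the keyword list, the 2x spike threshold, and the merchant names are all hypothetical, and keyword matching on merchant names is a crude signal that a real process would back with a vetted vendor list.

```python
from collections import defaultdict

# Hypothetical keyword list; a real one would be curated and maintained.
AI_KEYWORDS = ("ai", "gpt", "copilot", "llm")


def flag_charges(charges, spike_ratio=2.0):
    """Map each flagged merchant to the reasons it was flagged.

    charges: iterable of (merchant, month as 'YYYY-MM', amount) tuples.
    """
    monthly = defaultdict(dict)
    for merchant, month, amount in charges:
        monthly[merchant][month] = monthly[merchant].get(month, 0.0) + amount

    flagged = {}
    for merchant, months in monthly.items():
        reasons = []
        words = merchant.lower().replace("-", " ").split()
        if any(word in AI_KEYWORDS for word in words):
            reasons.append("ai-keyword")
        # Compare each month's total with the previous month's total.
        totals = [months[m] for m in sorted(months)]
        if any(prev > 0 and cur / prev >= spike_ratio
               for prev, cur in zip(totals, totals[1:])):
            reasons.append("spend-spike")
        if reasons:
            flagged[merchant] = reasons
    return flagged


# Placeholder charges, not real vendors or amounts.
charges = [
    ("Example AI Writer", "2025-01", 20.0),
    ("Example CRM", "2025-01", 500.0),
    ("Example CRM", "2025-02", 1400.0),
    ("Example Storage", "2025-01", 100.0),
    ("Example Storage", "2025-02", 100.0),
]
flagged = flag_charges(charges)
print(flagged)
```

Flagged merchants are candidates for the lightweight approval process before renewal, not verdicts; a spend spike on an existing tool may simply mean a per-seat AI add-on was switched on.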

For personal accounts, the approach shifts from procurement to policy and detection. Network monitoring and cloud access security brokers can surface AI tool traffic that bypasses IT channels. More practically, a clear acceptable use policy, one that employees actually see rather than one buried in a handbook, sets the baseline. The goal is to redirect AI use toward tools the organization has actually evaluated, not to block it entirely.

And critically, governance requires the accountability layer that AI disclaimers do not provide. Who is responsible when an AI output drives a wrong decision? That question needs an answer before the incident, not after. Enterprise-level AI governance requires the same transparency and audit trails that IT procurement reviews demand from traditional software, applied to a technology that currently operates without them. For AI specifically, that means versioning and audit trails that track how models evolve over time, so when a tool's behavior changes mid-contract, there is a record of what changed and when. Across sources, from Workday to Deloitte, the practical recommendation is the same: governance should involve security teams, risk officers, data scientists, and legal working together, not a technical committee in isolation. The 2025 SaaS Management Index found that 93% of IT leaders have data security concerns about AI tools. That concern needs an owner, a process, and a place in the org chart.
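The versioning and audit trail idea above can be sketched as an append-only log that records a new entry only when the model behind a tool is observed to change. Everything here is an assumption for illustration: the class and field names are hypothetical, and how an organization learns which model a vendor is running (release notes, vendor attestations, API metadata) is left open.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class ModelChange:
    tool: str
    previous_model: Optional[str]  # None for the first observation of a tool
    current_model: str
    observed_at: datetime
    owner: str                     # who is accountable for reviewing the change


class ModelAuditTrail:
    """Append-only record of observed model changes per tool."""

    def __init__(self) -> None:
        self._log: list[ModelChange] = []
        self._latest: dict[str, str] = {}

    def observe(self, tool: str, model: str, owner: str,
                observed_at: Optional[datetime] = None) -> Optional[ModelChange]:
        """Record a change if the model differs from the last one seen; else return None."""
        previous = self._latest.get(tool)
        if previous == model:
            return None
        change = ModelChange(tool, previous, model,
                             observed_at or datetime.now(timezone.utc), owner)
        self._log.append(change)
        self._latest[tool] = model
        return change

    def history(self, tool: str) -> list[ModelChange]:
        return [change for change in self._log if change.tool == tool]


# Placeholder tool and model identifiers.
trail = ModelAuditTrail()
jan = datetime(2025, 1, 10, tzinfo=timezone.utc)
sep = datetime(2025, 9, 3, tzinfo=timezone.utc)
trail.observe("Example Notes App", "model-v1", owner="procurement", observed_at=jan)
unchanged = trail.observe("Example Notes App", "model-v1", owner="procurement")
changed = trail.observe("Example Notes App", "model-v2", owner="procurement", observed_at=sep)
print(len(trail.history("Example Notes App")))
```

Attaching an owner to every change record is the part that addresses accountability: the log says not only what changed and when, but who was expected to look at it.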

All three vectors are solvable. None of them is solved by policy alone. Visibility has to come first.

The organizations that start with visibility and work toward accountability will be ahead of the next compliance requirement, not scrambling to respond to it.

Where this landed in practice

The Fortune 500 story at the start of this post ended with a specific request: use Teem to flag every product in their software catalog with AI or LLM capabilities, so they could begin building an approved AI tools list and move users away from unsanctioned accounts.

That covers vector one, software under contract. Teem's AI capability tagging identifies which tools in an enterprise software catalog have AI or LLM capabilities, providing procurement and IT with a verified starting point for governance. The other two vectors require different approaches but are not ungovernable. The catalog is where most organizations have the clearest ownership and the most immediate ability to act.

Most organizations assume AI governance is a deployment problem. It is a discovery problem. You cannot govern what you have not found, and finding it starts with knowing what you already contracted for.